#1 2021-08-12 08:44:21

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

No sound over HDMI when selecting 120hz refresh rate

Hey everyone,

I just got a new GPU for my desktop that supports 4k 120hz.

However, when I select the 120hz option, there is no sound over HDMI anymore.

Once I switch back to 60hz, the sound works again.

EDIT: I got a cable that supports 48Gbits, so 4k 120hz should work!

Any suggestions?

Thanks!

Last edited by omniscient_potato (2021-08-12 08:45:21)

Offline

#2 2021-08-12 13:33:08

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

Try a custom modeline (reduced blanking)

# 3840x2160 @ 120.000 Hz Reduced Blank (CVT) field rate 119.999 Hz; hsync: 274.438 kHz; pclk: 1097.75 MHz

Modeline "3840x2160_120.00_rb1" 1097.75 3840 3888 3920 4000 2160 2163 2168 2287 +hsync -vsync

hdmi … cable … should work!

If I had a penny for every time I heard that statement…

Ok, I guess I had about $5, so not all that impressive in the end ;-)

Online

#3 2021-08-12 14:07:14

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

Thanks for the reply!

I'll try your suggestion and let you know if it works!

The HDMI cable should really not be the problem, as 4K 120hz works perfectly fine in Windows, but I get your scepticism ;)

Offline

#4 2021-08-14 08:07:10

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

So, I've given your suggestion a try, but haven't gotten it to work...

When I add the modeline via xrandr, I get

X Error of failed request: BadMatch (invalid parameter attributes)

Major opcode of failed request: 140 (RANDR)

Minor opcode of failed request: 18 (RRAddOutputMode)

Serial number of failed request: 61

Current serial number in output stream: 62

once I try adding it to the correct output.

EDIT: I've tried to set the correct VertRefresh and HorizSync values, but that didn't solve it...

When I do it via the xorg config, it adds the modeline to an HDMI-1-1 output (which does not exist on the GPU) instead of the actual HDMI-0, even when I specify the identifier.

Maybe I've done something wrong after all?

Last edited by omniscient_potato (2021-08-14 08:08:06)

Offline

#5 2021-08-14 08:17:09

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

https://wiki.archlinux.org/title/Xrandr … esolutions

Reboot (or restart X11, just get a clean slate) and post the output of "xrandr -q" (and also the commands you tried to add the modeline)

Online

#6 2021-10-04 11:48:24

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

So, I did some more research, and it turns out it could be due to pixel clock limitations in the proprietary Nvidia driver. I installed nouveau and added the kernel parameter according to this. Now I can add the resolution to my video output, but once I actually select it, I get no signal anymore.

Offline

#7 2021-10-04 14:15:51

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

See /usr/share/doc/nvidia/README, you could try

Option "ModeValidation" "NoMaxPClkCheck

Online

#8 2021-10-04 15:55:45

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

With the proprietary or the nouveau drivers?

Offline

#9 2021-10-04 21:07:55

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

pacman -Qo /usr/share/doc/nvidia/README

(It's for the proprietary driver)

Online

#10 2021-10-05 09:29:41

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

I tried that, but the problem remains. For the nouveau driver, there is no signal anymore once I add the custom resolution, and for the nvidia driver, I still get no sound with 4K 120Hz but resolution and refresh rate work.

Offline

#11 2021-10-05 13:56:47

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

Did you make sure you switched to the mode w/ reduced blanking?

What's the output of "xrandr -q"? Do you have other frequencies available (eg. 100 Hz)?

The signal w/ reduced blanking is still well above 60Hz (@712.75 MHz)

Are you sure the signal on Windows isn't interlaced? (That would easily knock the signal down into the 60Hz range.)

Online

#12 2021-10-05 15:16:04

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

That's what xrandr -q spits out before trying to add the mode to the output, which fails with

X Error of failed request: BadMatch (invalid parameter attributes)

Major opcode of failed request: 140 (RANDR)

Minor opcode of failed request: 18 (RRAddOutputMode)

Serial number of failed request: 41

Current serial number in output stream: 42

Screen 0: minimum 8 x 8, current 3840 x 2160, maximum 32767 x 32767

HDMI-0 connected primary 3840x2160+0+0 (normal left inverted right x axis y axis) 1600mm x 900mm

3840x2160 60.00*+ 119.88 100.00 59.94 50.00 29.97 25.00 23.98

4096x2160 119.88 100.00 59.94 50.00 29.97 25.00 24.00 23.98

2560x1440 120.00

1920x1080 119.88 100.00 60.00 59.94 50.00 29.97 25.00 23.98

1360x768 60.02

1280x1024 60.02

1280x720 59.94 50.00

1152x864 60.00

1024x768 60.00

800x600 60.32

720x576 50.00

720x480 59.94

640x480 59.95 59.94 59.93

DP-0 disconnected (normal left inverted right x axis y axis)

DP-1 disconnected (normal left inverted right x axis y axis)

DP-2 disconnected (normal left inverted right x axis y axis)

DP-3 disconnected (normal left inverted right x axis y axis)

DP-4 disconnected (normal left inverted right x axis y axis)

DP-5 disconnected (normal left inverted right x axis y axis)

3840x2160_120.00_rb1 (0x215) 1097.750MHz +HSync -VSync

h: width 3840 start 3888 end 3920 total 4000 skew 0 clock 274.44KHz

v: height 2160 start 2163 end 2168 total 2287 clock 120.00Hz

I'll check what Windows says about that, but reduced blanking should not actually be necessary: both the GPU (HDMI 2.1) and the monitor support these rates. That's what the xorg log says about the monitor:

[ 429.310] (--) NVIDIA(GPU-0): LG Electronics LG TV (DFP-0): 42666.7 MHz maximum pixel clock

Offline

#13 2021-10-05 15:27:22

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

I'm not worried about GPU and also not the monitor, my concern is the cable.

Since adding the resolution still produces errors (despite "NoMaxPClkCheck"? xorg log? - there's a typo and an open quote in my post, sorry about that), you didn't test the lower clock, and we know that the audio doesn't make it at 120Hz.

Online

#14 2021-10-06 17:57:03

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

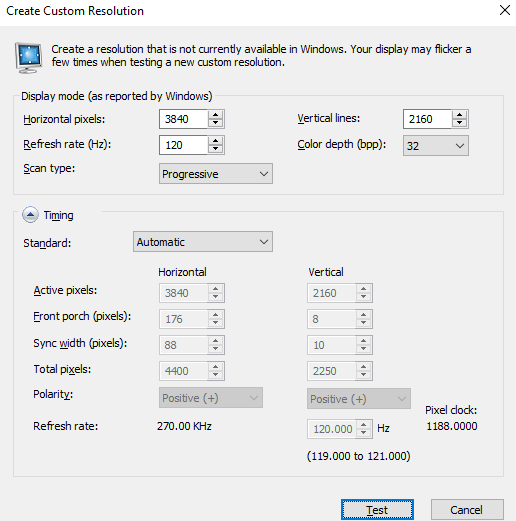

Here is the resolution that works on Windows. When I click on "test", resolution, refresh rate and audio all work, so I guess the cable works.

Offline

#15 2021-10-06 18:15:52

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

That pixel clock uses some sort of reduced blanking and is significantly lower than the 1498.25 MHz you're currently trying to use.

(cvt12 produces 1075.80 MHz for "-b" and 1097.75 MHz for "-r", windows has 270KHz instead of ~278 resp ~275 for reduced blanking modes)

Online

#16 2021-10-06 18:57:30

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

I tried the reduced blanking version, but now we're back at the problem of not being able to add the custom resolution to the output with the NVIDIA driver.

Offline

#17 2021-10-06 19:09:13

- progandy

- Member

- Registered: 2012-05-17

- Posts: 5,307

Re: No sound over HDMI when selecting 120hz refresh rate

Edit: Sorry, missed your last post.

This calculator shows the bandwidth utilization of the different blanking schemes:

https://tomverbeure.github.io/video_timings_calculator

If everything supports the 42GBit/s, then even with normal CVT ~10% should be free for audio. No idea what the problem is. If something is limited to 35GBit/s, then CVT is maxing out the link.
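As a rough cross-check of those figures, here is a minimal Python sketch that estimates how much of the link the video signal alone would use for the pixel clocks quoted in this thread. It assumes 8 bits per colour channel (24 bits per pixel), no DSC, and that a 48 Gbit/s HDMI 2.1 FRL link carries about 42.67 Gbit/s of payload after 16b/18b coding. Those are assumptions, not something established in this thread. Audio and protocol overhead are ignored, so the numbers will not match the calculator exactly, and all names are made up for illustration.

```python
# Rough link-utilization estimate for an uncompressed RGB video mode.
# Assumptions (not from the thread): 24 bits per pixel, no DSC,
# audio and protocol overhead ignored.

FRL_RAW_GBITS = 48.0                          # assumed raw HDMI 2.1 FRL rate
FRL_PAYLOAD_GBITS = FRL_RAW_GBITS * 16 / 18   # assumed 16b/18b line coding


def video_gbits(pixel_clock_mhz: float, bits_per_pixel: int = 24) -> float:
    """Video data rate in Gbit/s; the pixel clock already includes blanking."""
    return pixel_clock_mhz * 1e6 * bits_per_pixel / 1e9


def utilization(pixel_clock_mhz: float,
                link_gbits: float = FRL_PAYLOAD_GBITS,
                bits_per_pixel: int = 24) -> float:
    """Fraction of the link payload consumed by the video signal."""
    return video_gbits(pixel_clock_mhz, bits_per_pixel) / link_gbits


# Pixel clocks (MHz) as quoted in this thread.
PIXEL_CLOCKS_MHZ = {
    "CVT": 1498.25,
    "CVT reduced blanking": 1097.75,
    "Windows timing": 1188.00,
}

if __name__ == "__main__":
    for name, mhz in PIXEL_CLOCKS_MHZ.items():
        print(f"{name}: {video_gbits(mhz):.2f} Gbit/s, "
              f"{utilization(mhz):.0%} of {FRL_PAYLOAD_GBITS:.2f} Gbit/s")
```

Under these assumptions plain CVT needs roughly 36 Gbit/s, which fits into 42.67 Gbit/s but not into 35 Gbit/s, while both reduced-blanking variants leave considerably more headroom.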

If you want to try the exact windows modeline: (source: https://www.improwis.com/tables/video.w … pmodeline)

modeline "name" [pixelMHz] \
[hres] [hres+hfrontporch] [hres+hfrontporch+hsyncw] [htotal] \
[vres] [vres+vfrontporch] [vres+vfrontporch+vsyncw] [vtotal] \
[flags]

modeline "4K120Win" 1188.00 \
3840 4016 4104 4400 \
2160 2168 2178 2250 \
+hsync +vsync

Last edited by progandy (2021-10-06 19:19:58)

| alias CUTF='LANG=en_XX.UTF-8@POSIX ' | alias ENGLISH='LANG=C.UTF-8 ' |

Offline

#18 2021-10-06 19:34:16

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

Add

Option "ModeValidation" "AllowNonEdidModes"

And add the exact I/O (commands and output) when you try to add the modeline.

Online

#19 2021-10-07 08:30:16

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

I added the EDID option and rebooted. This is what I get:

cvt 3840 2160 120

# 3840x2160 119.98 Hz (CVT) hsync: 277.87 kHz; pclk: 1498.25 MHz

Modeline "3840x2160_120.00" 1498.25 3840 4192 4616 5392 2160 2163 2168 2316 -hsync +vsync

xrandr --newmode "3840x2160_120.00" 1498.25 3840 4192 4616 5392 2160 2163 2168 2316 -hsync +vsync

xrandr --addmode HDMI-0 3840x2160_120.00

X Error of failed request: BadMatch (invalid parameter attributes)

Major opcode of failed request: 140 (RANDR)

Minor opcode of failed request: 18 (RRAddOutputMode)

Serial number of failed request: 41

Current serial number in output stream: 42

EDIT: tried the Windows resolution and the reduced blanking version, both with the same result.
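For comparison, the horizontal sync rate and the refresh rate implied by each of the three modelines tried in this thread follow directly from the pixel clock and the total raster size (hsync = pclk / htotal, refresh = pclk / (htotal × vtotal)). A minimal Python sketch of that arithmetic; `Mode`, `hsync_khz` and `refresh_hz` are hypothetical names, not part of xrandr or cvt:

```python
from typing import NamedTuple


class Mode(NamedTuple):
    """The modeline fields needed to derive sync rates."""
    name: str
    pclk_mhz: float   # pixel clock in MHz
    htotal: int       # total pixels per line, including blanking
    vtotal: int       # total lines per frame, including blanking


def hsync_khz(mode: Mode) -> float:
    """Horizontal sync rate in kHz: lines drawn per millisecond."""
    return mode.pclk_mhz * 1000 / mode.htotal


def refresh_hz(mode: Mode) -> float:
    """Vertical refresh rate in Hz: frames drawn per second."""
    return mode.pclk_mhz * 1e6 / (mode.htotal * mode.vtotal)


# Values copied from the modelines posted in this thread.
MODES = [
    Mode("3840x2160_120.00", 1498.25, 5392, 2316),       # plain CVT
    Mode("3840x2160_120.00_rb1", 1097.75, 4000, 2287),   # CVT reduced blanking
    Mode("4K120Win", 1188.00, 4400, 2250),               # timing seen on Windows
]

if __name__ == "__main__":
    for mode in MODES:
        print(f"{mode.name}: hsync {hsync_khz(mode):.2f} kHz, "
              f"refresh {refresh_hz(mode):.3f} Hz")
```

All three land at about 120 Hz; they differ in how much blanking they carry, which is why the pixel clocks are so far apart.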

Last edited by omniscient_potato (2021-10-07 08:36:00)

Offline

#20 2021-10-07 14:09:17

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

Option "ModeValidation" "string"

This option provides fine-grained control over each stage of the mode

validation pipeline, disabling individual mode validation checks. This

option should only very rarely be used.

The option string is a semicolon-separated list of comma-separated lists

of mode validation arguments. Each list of mode validation arguments can

optionally be prepended with a display device name and GPU specifier.

"<dpy-0>: <tok>, <tok>; <dpy-1>: <tok>, <tok>, <tok>; ..."

Possible arguments:

o "NoMaxPClkCheck": each mode has a pixel clock; this pixel clock is

validated against the maximum pixel clock of the hardware (for a DFP,

this is the maximum pixel clock of the TMDS encoder, for a CRT, this

is the maximum pixel clock of the DAC). This argument disables the

maximum pixel clock checking stage of the mode validation pipeline.

o "NoEdidMaxPClkCheck": a display device's EDID can specify the maximum

pixel clock that the display device supports; a mode's pixel clock is

validated against this pixel clock maximum. This argument disables

this stage of the mode validation pipeline.

o "NoMaxSizeCheck": each NVIDIA GPU has a maximum resolution that it

can drive; this argument disables this stage of the mode validation

pipeline.

o "NoHorizSyncCheck": a mode's horizontal sync is validated against the

range of valid horizontal sync values; this argument disables this

stage of the mode validation pipeline.

o "NoVertRefreshCheck": a mode's vertical refresh rate is validated

against the range of valid vertical refresh rate values; this

argument disables this stage of the mode validation pipeline.

o "NoVirtualSizeCheck": if the X configuration file requests a specific

virtual screen size, a mode cannot be larger than that virtual size;

this argument disables this stage of the mode validation pipeline.

o "NoVesaModes": when constructing the mode pool for a display device,

the X driver uses a built-in list of VESA modes as one of the mode

sources; this argument disables use of these built-in VESA modes.

o "NoEdidModes": when constructing the mode pool for a display device,

the X driver uses any modes listed in the display device's EDID as

one of the mode sources; this argument disables use of EDID-specified

modes.

o "NoXServerModes": when constructing the mode pool for a display

device, the X driver uses the built-in modes provided by the core

XFree86/Xorg X server as one of the mode sources; this argument

disables use of these modes. Note that this argument does not disable

custom ModeLines specified in the X config file; see the

"NoCustomModes" argument for that.

o "NoCustomModes": when constructing the mode pool for a display

device, the X driver uses custom ModeLines specified in the X config

file (through the "Mode" or "ModeLine" entries in the Monitor

Section) as one of the mode sources; this argument disables use of

these modes.

o "NoPredefinedModes": when constructing the mode pool for a display

device, the X driver uses additional modes predefined by the NVIDIA X

driver; this argument disables use of these modes.

o "NoUserModes": additional modes can be added to the mode pool

dynamically, using the NV-CONTROL X extension; this argument

prohibits user-specified modes via the NV-CONTROL X extension.

o "NoExtendedGpuCapabilitiesCheck": allow mode timings that may exceed

the GPU's extended capability checks.

o "ObeyEdidContradictions": an EDID may contradict itself by listing a

mode as supported, but the mode may exceed an EDID-specified valid

frequency range (HorizSync, VertRefresh, or maximum pixel clock).

Normally, the NVIDIA X driver prints a warning in this scenario, but

does not invalidate an EDID-specified mode just because it exceeds an

EDID-specified valid frequency range. However, the

"ObeyEdidContradictions" argument instructs the NVIDIA X driver to

invalidate these modes.

o "NoTotalSizeCheck": allow modes in which the individual visible or

sync pulse timings exceed the total raster size.

o "NoDualLinkDVICheck": for mode timings used on dual link DVI DFPs,

the driver must perform additional checks to ensure that the correct

pixels are sent on the correct link. For some of these checks, the

driver will invalidate the mode timings; for other checks, the driver

will implicitly modify the mode timings to meet the GPU's dual link

DVI requirements. This token disables this dual link DVI checking.

o "NoDisplayPortBandwidthCheck": for mode timings used on DisplayPort

devices, the driver must verify that the DisplayPort link can be

configured to carry enough bandwidth to support a given mode's pixel

clock. For example, some DisplayPort-to-VGA adapters only support 2

DisplayPort lanes, limiting the resolutions they can display. This

token disables this DisplayPort bandwidth check.

o "AllowNon3DVisionModes": modes that are not optimized for NVIDIA 3D

Vision are invalidated, by default, when 3D Vision (stereo mode 10)

or 3D Vision Pro (stereo mode 11) is enabled. This token allows the

use of non-3D Vision modes on a 3D Vision monitor. (Stereo behavior

of non-3D Vision modes on 3D Vision monitors is undefined.)

o "AllowNonHDMI3DModes": modes that are incompatible with HDMI 3D are

invalidated, by default, when HDMI 3D (stereo mode 12) is enabled.

This token allows the use of non-HDMI 3D modes when HDMI 3D is

selected. HDMI 3D will be disabled when a non-HDMI 3D mode is in use.

o "AllowNonEdidModes": if a mode is not listed in a display device's

EDID mode list, then the NVIDIA X driver will discard the mode if the

EDID 1.3 "GTF Supported" flag is unset, if the EDID 1.4 "Continuous

Frequency" flag is unset, or if the display device is connected to

the GPU by a digital protocol (e.g., DVI, DP, etc). This token

disables these checks for non-EDID modes.

o "NoEdidHDMI2Check": HDMI 2.0 adds support for 4K@60Hz modes with

either full RGB 4:4:4 pixel encoding or YUV (also known as YCbCr)

4:2:0 pixel encoding. Using these modes with RGB 4:4:4 pixel encoding

requires GPU support as well as display support indicated in the

display device's EDID. This token allows the use of these modes at

RGB 4:4:4 as long as the GPU supports them, even if the display

device's EDID does not indicate support. Otherwise, these modes will

be displayed in the YUV 4:2:0 color space.

o "AllowDpInterlaced": When driving interlaced modes over DisplayPort

protocol, NVIDIA GPUs do not provide all the spec-mandated metadata.

Some DisplayPort monitors are tolerant of this missing metadata. But,

in the interest of DisplayPort specification compliance, the NVIDIA

driver prohibits interlaced modes over DisplayPort protocol by

default. Use this mode validation token to allow interlaced modes

over DisplayPort protocol anyway.

Examples:

Option "ModeValidation" "NoMaxPClkCheck"

disable the maximum pixel clock check when validating modes on all display

devices.

Option "ModeValidation" "CRT-0: NoEdidModes, NoMaxPClkCheck;

GPU-0.DFP-0: NoVesaModes"

do not use EDID modes and do not perform the maximum pixel clock check on

CRT-0, and do not use VESA modes on DFP-0 of GPU-0.

Skip all checks and allow everything…
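To make the option string format from the README excerpt concrete (semicolon-separated entries, each an optional display prefix followed by comma-separated tokens), here is a minimal Python sketch that splits such a string and flags tokens that do not appear in the excerpt. It only mirrors the grammar as quoted above and is not how the driver itself parses the option; the function names and the `"*"` key for entries without a display prefix are made up for illustration.

```python
from typing import Dict, List

# Tokens listed in the README excerpt quoted in this post.
README_TOKENS = {
    "NoMaxPClkCheck", "NoEdidMaxPClkCheck", "NoMaxSizeCheck",
    "NoHorizSyncCheck", "NoVertRefreshCheck", "NoVirtualSizeCheck",
    "NoVesaModes", "NoEdidModes", "NoXServerModes", "NoCustomModes",
    "NoPredefinedModes", "NoUserModes", "NoExtendedGpuCapabilitiesCheck",
    "ObeyEdidContradictions", "NoTotalSizeCheck", "NoDualLinkDVICheck",
    "NoDisplayPortBandwidthCheck", "AllowNon3DVisionModes",
    "AllowNonHDMI3DModes", "AllowNonEdidModes", "NoEdidHDMI2Check",
    "AllowDpInterlaced",
}


def parse_mode_validation(value: str) -> Dict[str, List[str]]:
    """Split a ModeValidation string into {display: [tokens]}.

    Entries without a display prefix are stored under the key "*".
    """
    result: Dict[str, List[str]] = {}
    for entry in value.split(";"):
        entry = entry.strip()
        if not entry:
            continue
        display, sep, tokens = entry.partition(":")
        if not sep:  # no "<dpy>:" prefix, applies to all displays
            display, tokens = "*", entry
        result.setdefault(display.strip(), []).extend(
            tok.strip() for tok in tokens.split(",") if tok.strip()
        )
    return result


def unknown_tokens(value: str) -> List[str]:
    """Return tokens that are not in the README excerpt (possible typos)."""
    parsed = parse_mode_validation(value)
    used = {tok for toks in parsed.values() for tok in toks}
    return sorted(used - README_TOKENS)


if __name__ == "__main__":
    example = "CRT-0: NoEdidModes, NoMaxPClkCheck; GPU-0.DFP-0: NoVesaModes"
    print(parse_mode_validation(example))
    print(unknown_tokens("AllowNonEdidModes, NoMaxPClkChek"))
```

A check like this would catch a misspelled token before restarting X, though a different driver version may know tokens that are not in this excerpt.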

Online

#21 2021-10-07 14:21:57

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

What do you mean by that?

Offline

#22 2021-10-07 14:23:23

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

Add all relevant ModeValidation tokens in a comma-separated list (see the posted README excerpt) and try again.

Online

#23 2021-10-14 15:45:07

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

Put this in my xorg config

Option "ModeValidation" "AllowNonEdidModes, NoMaxPClkCheck, NoEdidHDMI2Check, AllowNon3DVisionModes, NoDisplayPortBandwidthCheck, NoDualLinkDVICheck, NoTotalSizeCheck, NoExtendedGpuCapabilitiesCheck, NoEdidMaxPClkCheck, NoMaxSizeCheck"

but still the same error when trying to add the new resolution.

Offline

#24 2021-10-15 10:35:27

- seth

- Member

- From: Won't reply 2 private help req

- Registered: 2012-09-03

- Posts: 74,931

Re: No sound over HDMI when selecting 120hz refresh rate

This user seems to have success adding the modeline to the config as well, rather than trying to inject it using xrandr: https://bbs.archlinux.org/viewtopic.php?id=270410

Online

#25 2021-10-26 16:11:22

- omniscient_potato

- Member

- Registered: 2020-07-12

- Posts: 63

Re: No sound over HDMI when selecting 120hz refresh rate

Unfortunately, this did not work either.

This is the relevant part of my xorg config:

Section "Monitor"

Identifier "Monitor0"

VendorName "Unknown"

ModelName "Unknown"

Option "DPMS"

Modeline "3840x2160_120.00_rb1" 1097.75 3840 3888 3920 4000 2160 2163 2168 2287 +hsync -vsync

Option "PreferredMode" "3840x2160_120.00_rb1"

EndSection

Section "Device"

Identifier "Device0"

Driver "nvidia"

VendorName "NVIDIA Corporation"

Option "ModeValidation" "AllowNonEdidModes, NoMaxPClkCheck, NoEdidHDMI2Check, AllowNon3DVisionModes, NoDisplayPortBandwidthCheck, NoDualLinkDVICheck, NoTotalSizeCheck, NoExtendedGpuCapabilitiesCheck, NoEdidMaxPClkCheck, NoMaxSizeCheck"

EndSection

Offline